This guest blog post is by Dr Daniel Turner, a qualitative researcher and Director of Quirkos, a simple and visual software tool for qualitative analysis. It’s based on collaborative research with Claire Grover, Claire Lewellyn, and the late Jon Oberlander at the Informatics department, University of Edinburgh, with Kathrin Cresswell and Aziz Sheikh from the Usher Institute of Population Health Sciences and Informatics, University of Edinburgh. The project was part-funded by the Scottish Digital Health & Care Institute.

Can a computer do qualitative analysis?

It seems that everywhere we look, researchers are applying machine learning (ML) and artificial intelligence (AI) to new fields. But what about qualitative analysis? Is there potential for software to help researchers code qualitative data and understand emerging themes and trends in complex datasets?

Firstly, why would we want to do this? The power of qualitative research comes from uncovering the unexpected and unanticipated in complex issues that defy easy questions and answers. Quantitative research methods typically struggle with these kinds of topics, and machine learning approaches are essentially quantitative methods of analysing qualitative data.

However, while machines may not be ready to take the place of a researcher in setting research questions and evaluating complex answers, there are areas that could benefit from a more automated approach. Qualitative analysis is time-consuming and hence costly, which greatly limits the situations in which it is used. If we could train a computer system to act as a guide or assistant for a qualitative researcher wading through very large, long or longitudinal qualitative data sets, it could open many doors.

Few qualitative research projects have the luxury of a secondary coder who can independently read, analyse and check interpretations of the data, but an automated tool could perform this function, giving some level of assurance and suggesting quotes or topics that might have been overlooked.

Qualitative research could use larger data sources if a tool could at least speed up the work of a human researcher. Qualitative research aims to focus on the small, but in practice this often means studying a very small population group or geographical area. With faster coding tools, the same resources could support qualitative research that samples more diverse populations, showing how universal or variable trends are.

It could also allow for secondary analysis: qualitative research generates huge amounts of deep, detailed data that is typically used to answer only a small set of research questions. Using ML tools to explore existing qualitative data sets with new research questions could help to get more value from archived and/or multiple sets of data.

I’m also very excited about the potential for including wider sources of qualitative data in research projects. While most researchers go straight to interviews or focus groups with respondents, analysing policy documents or media coverage on the subject would help give a better understanding of the culture and context around a research issue. This work is usually too extensive to include systematically in academic projects, but including it could make research findings more applicable to setting policy and understanding media coverage of contentious issues.

With an interdisciplinary team from the University of Edinburgh, we performed experiments with current ML tools to see how feasible these approaches currently are. We applied conventional ‘off-the-shelf’ Natural Language Processing tools to three different types of qualitative data set, performing ‘categorisation’ tasks in which the researchers had already defined the ‘topics’ or categories we wanted the software to extract from the data. The software was tasked with assessing which sentences were relevant to each of the topics we defined. Even with the best-performing approach, the agreement rate with the researchers’ own coding was only ~20%. However, this was not far off the agreement rate of a second human coder who had not been involved in the research project and knew only the categories to code into, not the research question. In this respect, the second coder was put in the same situation as the computer.

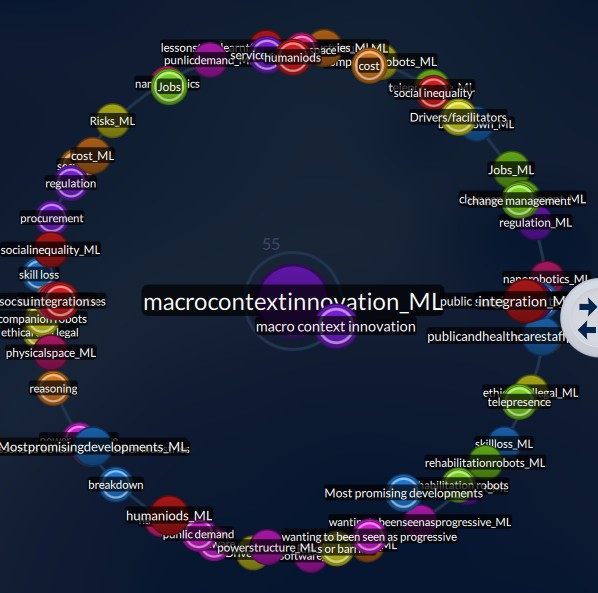

Figure 1: Visualisations in Quirkos allow the user to quickly see how well automated coding correlates with their own interpretations
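The post does not say exactly how the ~20% agreement rate was calculated. As a purely illustrative sketch, assuming agreement is measured per topic as the overlap (Jaccard index) between the sets of sentences each coder assigned to that topic, a comparison between a researcher and the software might look like this. The function name `topic_agreement`, the topic names and the sentence indices are all hypothetical and are not taken from the study.

```python
from typing import Dict, Set


def topic_agreement(coder_a: Dict[str, Set[int]],
                    coder_b: Dict[str, Set[int]]) -> Dict[str, float]:
    """Per-topic agreement as the Jaccard overlap of coded sentence indices.

    Assumption (hypothetical representation): each coder's work is a mapping
    from topic name to the set of sentence indices coded to that topic.
    """
    scores: Dict[str, float] = {}
    for topic in sorted(set(coder_a) | set(coder_b)):
        a = coder_a.get(topic, set())
        b = coder_b.get(topic, set())
        union = a | b
        # If neither coder used the topic at all, treat it as full agreement.
        scores[topic] = len(a & b) / len(union) if union else 1.0
    return scores


# Hypothetical example: sentences coded by a researcher and by the software.
researcher = {"access": {1, 2, 3, 4}, "cost": {5, 6}}
software = {"access": {3, 4, 7, 8, 9}, "cost": {6, 10}}

scores = topic_agreement(researcher, software)
for topic, score in scores.items():
    print(f"{topic}: {score:.2f}")
```

Here the two coders share 2 of the 7 sentences either of them coded to "access" (about 0.29) and 1 of 3 for "cost" (about 0.33). A chance-corrected statistic such as Cohen's kappa is a common alternative when every sentence receives an explicit yes/no decision for each topic.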

The core challenge comes from the relatively small size of qualitative data sets. ML algorithms work best when they have thousands or even millions of sources in which to identify patterns. Typical qualitative research projects may have only a dozen or fewer sources, so these approaches generally give weak results. However, the accuracy of the process could be improved, especially by pre-training the model on other related datasets.

There are also limitations to the way the ML approaches themselves work: for example, there is currently no way to input the research questions into the software. While you can provide a coding framework of topics you are interested in (or get the software to try to guess what the categories should be), you can’t explain to the algorithm what your research questions are, and therefore which aspects of the data are interesting to you. ML might highlight how often your respondents talked about different flavours of ice cream, but if your interest is in healthy eating this may not be very helpful.

Finally, even when the ML is working well, it’s very difficult to know why: ML typically doesn’t create a human-readable decision tree that would explain each choice it made. In deep learning approaches, where the algorithm is self-training, even the designers of the system can’t see how it works, creating a ‘black box’. This is problematic because we can’t see its decision-making process, so we can’t tell whether a few unusual pieces of data are skewing the results, or whether it is making basic mistakes like confusing the two different meanings of a word like ‘mine’.

There is potential here for a new field: one that brings together the quantitative world of big data and the insight of qualitative questions. It’s unlikely that these tools will remove the researcher and their primary role in analysis, and there will always be problems and questions that are best met with a purely manual qualitative approach. However, for the right research questions and data sets, it could open the door to new approaches and even more nuanced answers.
